A remarkable epidemic took place in 1969 at the College of the Holy Cross in Worcester, Massachusetts. In the autumn of that year, the college was forced to cancel the football season after the first two games due to an outbreak of hepatitis. The first football game of that season was against Harvard and took place on September 27th; Holy Cross lost 13-0. The team appeared sluggish to fans, and one player missed the game due to fever. Michael Neagle described what happened next in a 2004 essay:

> Players began dropping out during the week leading up to the team’s next game at Dartmouth [on October 4th]. What had been described as a “flu bug” by newspapers during the week was confirmed as hepatitis the day of the game. Eight players did not make the trip because of illness. Some got sick on the drive up. More were sidelined when they fell ill during the game . . . Holy Cross lost 38-6.

There were interesting facets to this outbreak, as told in a 1972 Associated Press story:

> The outbreak was somewhat puzzling because faculty members, the freshman football team, and others on the Worcester, Mass., campus before formal opening of classes were not affected. Food services were studied and did not produce suspicious leads.

Neagle describes what was eventually pieced together:

> . . . [the] season was doomed after just the second day of practice. On Aug. 29, a hot summer day in Worcester, on the practice fields where the Hart Center now stands, players drank water from a faucet that was later found to be contaminated with hepatitis. Though investigators almost immediately suspected the drinking fountain as the source of the illness, it took nearly a year to determine conclusively the sequence of events that led to the contamination.
>
> On that fateful day, firefighters battled a blaze on nearby Cambridge Street. This caused a drop in the water pressure, allowing ground water to seep into the practice field’s irrigation system. That ground water had been contaminated by a group of children living near the practice facility who were already infected with hepatitis. Once the players drank from the contaminated faucet, they too became infected.

A 1972 study by Morse et al described the epidemiology of the event:

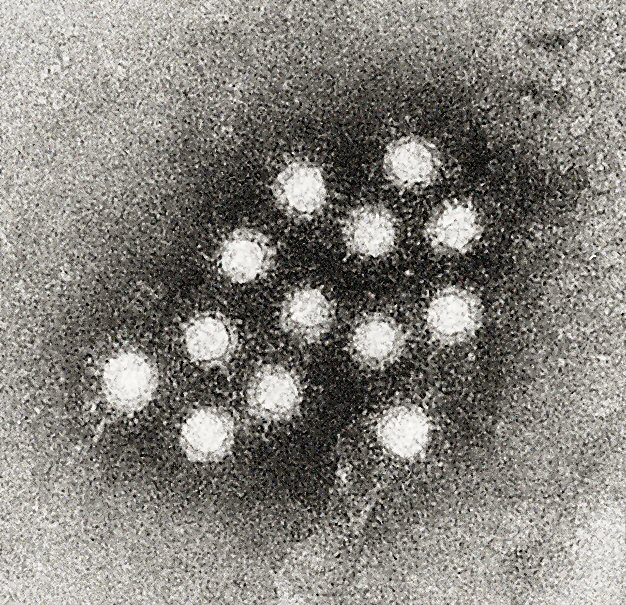

> Of 97 persons exposed, 90 were infected, 32 experienced typical icteric [jaundice] disease, 22 were anicteric but symptomatic, and 36 asymptomatic players were recognized as having significantly elevated serum glutamic pyruvic transaminase values (> 100 units). Other athletes, using the same facilities but arriving six days after the established date of exposure, were unaffected. The decision to obtain blood samples from the entire team, as soon as the initial cases were recognized, resulted in the demonstration of an unexpectedly high attack rate of 93% . . .

An attack rate of 93% is remarkable, but potentially consistent with a high inoculum that could have been delivered by contaminated water. Friedman et al returned to the event in a 1985 study. Using a radioimmunoassay to test stored serum samples for IgM antibody to hepatitis A virus, they found that

> Only individuals with icteric hepatitis were found to have IgM anti-HAV in serum; those with presumed anicteric illness were shown not to be infected with hepatitis A virus. The attack rate was thus only 34%, not 93% as originally reported, and the incidence of icteric illness in those infected was 100%, not 33%.

What made the other players sick thus remains a mystery, though one can speculate about potential pathogens in the environment that could have contaminated the practice field faucet given the negative pressure scenario. I'm always intrigued by disease events that seem so open-and-shut based on the technology of one era but less so when analyzed with the technology of another. This is one of those events.
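For readers who want to check the arithmetic behind the 93% figure reported by Morse et al, here is a minimal Python sketch. It assumes the usual definition of attack rate (cases divided by people exposed) and uses only the counts quoted from the 1972 study. The function and variable names are illustrative and do not come from either paper.

```python
def attack_rate(cases: int, exposed: int) -> float:
    """Return the attack rate: the fraction of exposed people counted as cases."""
    if exposed <= 0:
        raise ValueError("exposed must be positive")
    if not 0 <= cases <= exposed:
        raise ValueError("cases must be between 0 and exposed")
    return cases / exposed


# Counts as quoted from Morse et al (1972); names are illustrative.
EXPOSED = 97
icteric = 32                     # typical jaundiced disease
anicteric_symptomatic = 22       # symptomatic without jaundice
asymptomatic_elevated_sgpt = 36  # no symptoms, SGPT > 100 units

# The 1972 study counted all three groups as infected.
original_cases = icteric + anicteric_symptomatic + asymptomatic_elevated_sgpt
original_rate = attack_rate(original_cases, EXPOSED)

print(f"{original_cases} of {EXPOSED} exposed: {original_rate:.0%}")
# prints "90 of 97 exposed: 93%"
```

The three groups sum to the 90 infections reported, and 90 of 97 rounds to 93%. The passages quoted here do not give the exact counts behind the 1985 figure of 34%, so the sketch does not try to reproduce it.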

(image source: Wikipedia)